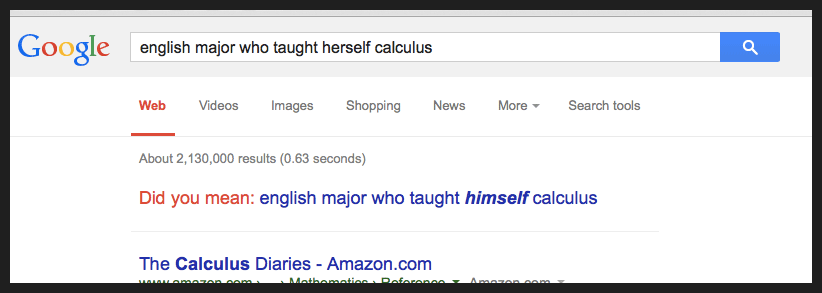

When I googled “english major who taught herself calculus,” Google gave me the result I wanted — plus a most unhelpful suggestion. I wondered why.

Last Thursday morning, I was following up on a book recommendation from sometime-TED-blogger Ben Lillie. A writer he knows, an English major, taught herself calculus and wrote a book about it. Don’t you want to read that book? I sure did. But I couldn’t remember the writer’s name that morning. I did, however, remember the plot of the book. So I searched for “english major who taught herself calculus.”

And Google asked:

Did you mean: “english major who taught himself calculus”?

It was just so weird, I took a screengrab and tweeted it. What was there to say in comment besides: “No Google, no I didn’t.”

Then I went off to a meeting. When I came back, my username was trending in New York, right behind “Metta.” (It was the day basketball player Metta World Peace changed his name to The Panda’s Friend.) I turned off alerts on my phone. The tweet was going viral.

In hindsight I was very lucky over that day and the next few days as replies and RTs rolled in. Pointing out bias in the media can lead to strong reactions from people who feel personally accused, even if the bias can’t possibly be their personal fault. And I’ve worked in social media long enough to know that a sour comment — even from the most obvious troll — can have a physical effect on you for an hour or so. Your ears ring, your face goes red, you ruminate. And I didn’t want that happening while I was leading my staff meeting, so I stayed off Twitter for most of the day.

But as it turned out, I didn’t see a lot of backlash and hate. Because someone big and untouchable seemed to be to blame: Google.

People were tweeting “sexist google” and “shame on google” and stuff — and honestly, that bugged me too. A blogger suggested that I was “rather offended,” but really, I wasn’t. I thought the suggestion was dumb and unnecessary, sure. Especially since the first search result was exactly the book I was looking for, Jennifer Ouellette’s The Calculus Diaries — and I made sure my screengrab showed that.

But algorithms are machines. If you don’t like the result a machine gives you, don’t waste time being offended — find the people who made the algorithm, and suggest they might want to teach it a better behavior. We all have that power.

Please know, I’m not justifying Google actively suggesting what it suggested. But I don’t think it was malicious or deliberate.

I heard from Google Press pretty quickly (though, um, via Google+, so it took a few days to actually track their response down), and here’s what they wrote:

We saw your tweet — just wanted to reach out and explain a bit here.

If you search for “taught himself calculus” (in quotes), you’ll see it’s a particularly popular phrase on the web, appearing about 282,000 times — because there’s a popular story out there that Einstein taught himself calculus as a teenager (and a recent news story that a kid in Indiana did too). “Taught herself calculus” appears only around 4,000 times. So when Google sees two phrases like this and one is much more common, it may suggest it as a possible alternate search. It’s more complex than that, but that’s essentially why you’re seeing this result. (Darn you, Einstein!)

Clearly we want our algorithms to be smarter and actually understand the meaning of language better, which is why we’re investing a lot in cutting-edge computer science techniques like natural language understanding… but those are 50-year-old A.I. problems that will take years yet to solve.

In the meantime, we’re also huge supporters of getting more women into STEM fields, which is why we support programs like Made With Code (and many more).
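
Google’s explanation boils down to comparing how often two phrasings show up on the web. Here is a toy sketch of that idea in Python. It is not Google’s actual system (their own note says the real thing is more complex). The counts are the figures quoted in their reply; the function name `suggest_alternate`, the `MIN_RATIO` threshold of 10 and the idea of passing in a list of candidate variants are my own assumptions, purely for illustration.

```python
# Phrase counts as quoted in Google's reply (approximate web hits).
PHRASE_COUNTS = {
    "taught himself calculus": 282_000,
    "taught herself calculus": 4_000,
}

# Assumed threshold: only suggest a variant that is at least
# this many times more common than what the user typed.
MIN_RATIO = 10


def suggest_alternate(phrase, variants, counts=PHRASE_COUNTS, min_ratio=MIN_RATIO):
    """Return the most common variant if it is far more common than `phrase`, else None."""
    own_count = counts.get(phrase, 0)
    # Pick the candidate with the highest count; None if there are no candidates.
    best = max(variants, key=lambda v: counts.get(v, 0), default=None)
    if best is None or best == phrase:
        return None
    # Guard against dividing by zero by treating unseen phrases as seen once.
    if counts.get(best, 0) >= min_ratio * max(own_count, 1):
        return best
    return None


suggestion = suggest_alternate("taught herself calculus", ["taught himself calculus"])
if suggestion:
    print(f"Did you mean: {suggestion}?")
```

With these numbers, “himself” is roughly 70 times more common than “herself,” so a rule driven only by frequency proposes the swap. Nothing in it asks whether the searcher meant exactly what she typed.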

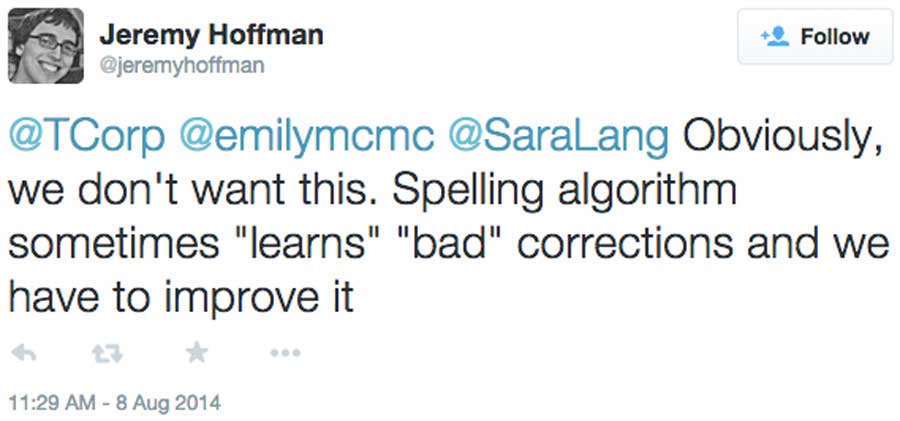

Another engineer from Google tweeted about why users were sometimes seeing a red squiggly line under the word “herself,” which I didn’t see but some users did when they fact-checked it:

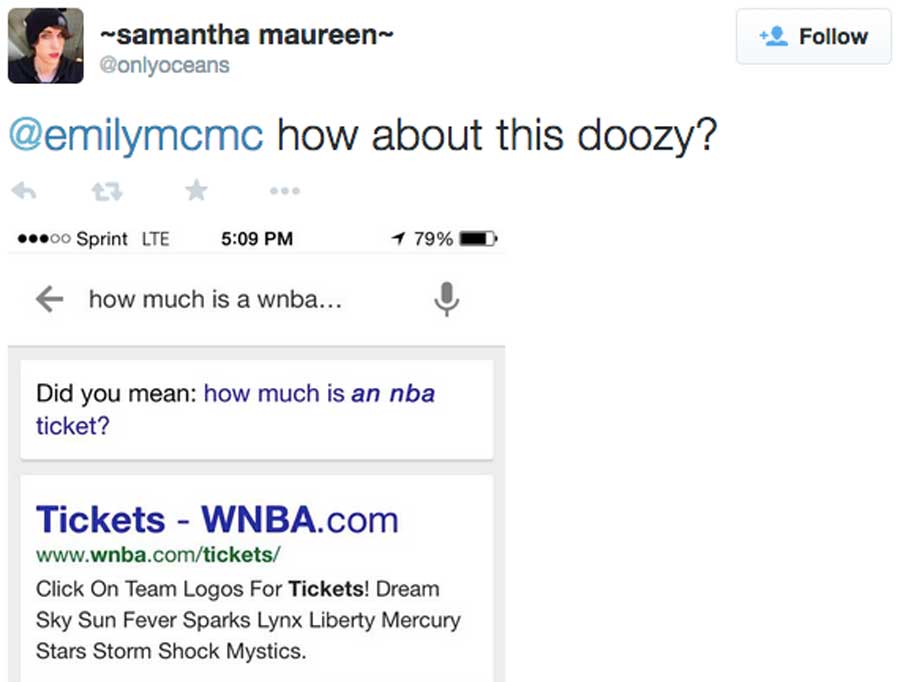

Meanwhile, of course, people tried every variation of this search. My favorite:

And every one of these results should be tweeted, should be shared. We are Google’s customers, as well as their dataset. Somewhere in their machine, there’s a little AI that should ask: Am I suggesting replacing a female-gendered term with a male one for a good reason? Do our customers actually want me to do that? And does this reflect our own values as a company? (Some interesting thinking here: “Help, my algorithm is a racist.”)

So, as unhelpful as this search result was, I want to think there are people at Google working on this problem — now that it has been retweeted 3,500 times.

The whole situation makes me think of TED’s own past problems with unbalanced speaker lineups. TED had plenty of perfectly plausible reasons in earlier years for lineups that didn’t reflect diversity. We could have gone on excusing ourselves. But our audience clearly told us, an unbalanced lineup isn’t what our customers want. And our staff said, unbalanced lineups don’t reflect our values as a company. TED is actively addressing lineup balance, and it starts from the ground up, with things like TED-Ed Clubs in schools, open auditions and active, persistent recruiting. So I empathize, and that’s why I reproduced Google’s answer in full above, including their pitch for Made With Code. It’s all part of the solution.

If Google’s AI can recognize a cat (and Microsoft’s can recognize a corgi), perhaps it can also learn to recognize a problem to be solved.

Two last thoughts:

I should probably ask Jennifer Ouellette how many copies of her book have sold in the past week, after 3.5k people basically retweeted the plot.

And a truly great thing was seeing how many people fact-checked this search result for themselves before they shared it. Fact-checking ftw!